For many small teams, SERP features tracking tools promise a clear window into Google’s search results, a way to track features and optimize content. Yet, in practice, these tools often deliver a mirage of dashboards that look accurate but fail to reflect the reality your users see.

Understanding why this happens and how to address it requires a deeper look at the blind spots of SERP tracking tools and the nature of modern search results.

SERP Features Tracking Tools Often Mislead Visibility

If your SERP tracking tool reports improvements but your traffic remains flat, the problem is likely in what the tool measures. Traditional tools track static rankings, assuming that a #1 position is inherently valuable. This is arguably the biggest limitation of SERP features tracking tools.

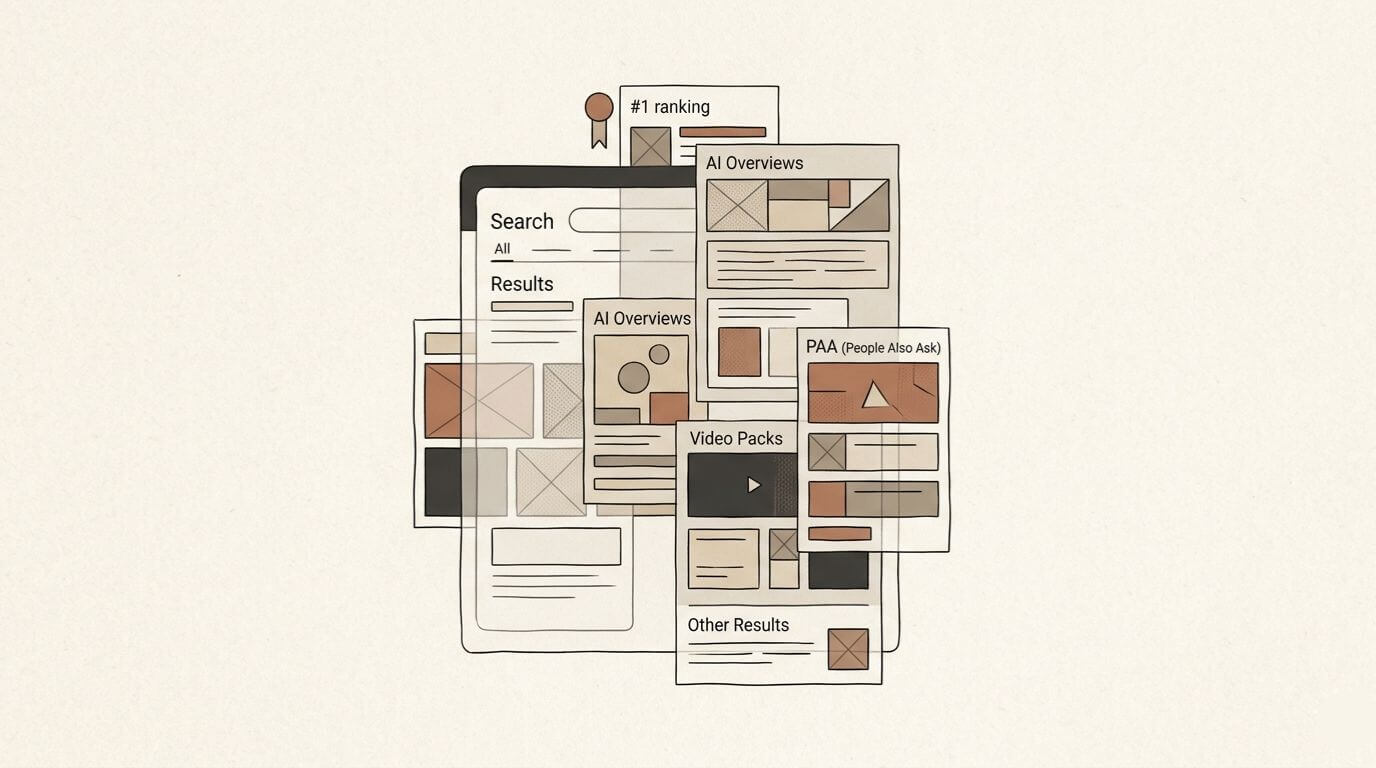

Google’s SERP has evolved. Features like AI-generated overviews and video packs have expanded, meaning your “#1” may appear below multiple features.

Example: Your page ranks #1 for a query, but the SERP now includes:

- an AI-generated summary at the top

- two People Also Ask (PAA) boxes

- a video carousel

Even though the ranking is technically #1, the slice of the SERP your link occupies is smaller. Traffic doesn’t increase because the feature-rich layout steals attention. Your tool is accurate in isolation but, unfortunately, irrelevant in context.

Field Test: Count how many rich results (videos, PAA, summaries) show up for a keyword where you rank #1. Are you truly above the fold?
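The field test can be made repeatable with a few lines of Python. This is a minimal sketch, assuming you write down the blocks of a SERP by hand, from top to bottom. The `SerpBlock` type, the `features_above` helper, and the block names are hypothetical and do not come from any tracking tool.

```python
from dataclasses import dataclass


@dataclass
class SerpBlock:
    """One block on the page, recorded by hand (hypothetical structure)."""
    kind: str      # e.g. "ai_overview", "paa", "video_carousel", "organic"
    url: str = ""  # only filled in for organic results


def features_above(blocks, my_url):
    """List the non-organic blocks that appear before my_url's organic result.

    Returns None if my_url is not among the organic results.
    """
    above = []
    for block in blocks:
        if block.kind == "organic" and block.url == my_url:
            return above
        if block.kind != "organic":
            above.append(block.kind)
    return None


# Illustrative SERP matching the example above: ranked #1, but below four features.
serp = [
    SerpBlock("ai_overview"),
    SerpBlock("paa"),
    SerpBlock("paa"),
    SerpBlock("video_carousel"),
    SerpBlock("organic", "https://example.com/guide"),
]
print(features_above(serp, "https://example.com/guide"))
```

The longer the returned list, the less that “#1” is likely to be worth in practice.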

Static Snapshots vs Real User SERPs

Most SERP tools capture a single “average” snapshot. Small teams often wonder how to track SERP features in a way that reflects real users, and not just the conventional “average”. In reality:

- Google personalizes results based on search history, device, and location.

- Features appear or disappear depending on query intent and timing.

- Micro-SERPs (the small variations for different users) dominate actual user experience.

If your tool reports that a snippet exists, it may be a consensus average and not what any single user sees. As a consequence, small teams relying on these snapshots optimize for an imaginary version of Google, wasting resources on ephemeral visibility.

Tracking Stability vs Exploiting Volatility

SERP tools excel at measuring stability, meaning that they focus on consistent features that are easy to report. But this can limit SERP tracking for SEO. Some of the biggest opportunities live in volatility: temporary or emerging features that the tool filters out as noise.

Example: A new “discussion panel” feature appears for a high-intent query. Most tools don’t track it because it fluctuates daily. Early adopters who notice the pattern can capture visibility before it stabilizes or becomes saturated. Waiting for a tool to report the feature means missing the window entirely.

Small teams should shift perspective: instead of chasing averages, monitor early-stage SERP patterns, the messy, unstable, and unpredictable signals that represent real opportunity.

Field Test: Pick a high-intent keyword and search it daily for a week. Note any transient features like panels, carousels, or snippets that appear inconsistently.
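A week of notes from this test can be sorted into stable and transient features with a short script. The sketch below assumes one hand-recorded list of feature names per day. The `classify_features` helper and the feature names are illustrative assumptions, not output from a real tool.

```python
from collections import Counter


def classify_features(daily_observations):
    """Split features into those seen every day and those seen only on some days.

    daily_observations: one list of feature names per day (hypothetical manual notes).
    """
    days = len(daily_observations)
    # Count each feature at most once per day.
    counts = Counter(f for features in daily_observations for f in set(features))
    stable = sorted(f for f, c in counts.items() if c == days)
    transient = sorted(f for f, c in counts.items() if c < days)
    return stable, transient


# Illustrative week of daily checks for one keyword.
week = [
    ["paa", "video_carousel"],
    ["paa"],
    ["paa", "discussion_panel"],
    ["paa", "video_carousel"],
    ["paa"],
    ["paa"],
    ["paa", "discussion_panel"],
]
stable, transient = classify_features(week)
print("stable:", stable)
print("transient:", transient)
```

The transient list is the part a dashboard would most likely smooth away, so it is the part worth reading first.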

SERP Features Most Tools Systematically Miss

Even the best platforms miss emerging or experimental features. AI overviews or temporal answer boxes may not appear consistently enough to enter clean datasets.

The consequence is that small teams optimize for features that are “trackable” but ignore high-leverage opportunities that exist precisely because they are new or inconsistent.

Advantage often lies in the gaps: the features Google is testing and users see only inconsistently. Tools aren’t designed to detect these gaps because they prioritize repeatable data.

Presence vs Replacement: What SERP Tracking Should Measure

Most dashboards report feature presence: “Snippet exists,” “Video pack exists,” etc. This tells you what is there, but not what is disappearing.

Understanding SERP transitions is important:

- When a video pack disappears and a snippet emerges, that is a signal about intent evolution.

- Tracking replacements reveals which content types are being favored or phased out.

Small teams often celebrate winning a snippet, unaware that a new AI block has displaced the video pack that drove engagement.

Field Test: Check your SERPs for a keyword you rank for. Note if a video pack was replaced by a snippet or AI feature.
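If you keep your notes as simple sets of feature names, the difference between two checks takes only a few lines. This is a rough sketch under that assumption. `serp_transition` and the feature names are made up for illustration.

```python
def serp_transition(before, after):
    """Compare two hand-recorded snapshots of the same query (hypothetical helper)."""
    before, after = set(before), set(after)
    return {
        "disappeared": sorted(before - after),
        "appeared": sorted(after - before),
        "kept": sorted(before & after),
    }


# Illustrative notes for one keyword, taken a few weeks apart.
last_month = ["video_pack", "paa"]
this_month = ["featured_snippet", "ai_overview", "paa"]

change = serp_transition(last_month, this_month)
print(change)
```

A feature listed under "disappeared" next to new entries under "appeared" is the replacement signal that a presence-only report would not show.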

The Misleading Comfort of Averaged Data

Aggregated, de-personalized, or location-neutral SERP data can lull teams into a false sense of security. Clean datasets suggest stability, but real user experiences are chaotic.

Example: Your tool reports that a PAA box is present in 90% of queries. In reality:

- Some users see it only on mobile

- Others see different PAA entries based on past searches

- Location differences shift its visibility entirely

Optimizing for the averaged SERP means targeting a Google that no one sees. The cleaner your data, paradoxically, the further it may be from reality.
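A small calculation shows how an averaged figure can hide the split between segments. The sketch below assumes each manual check is logged with a device, a location, and the features seen. The `presence_rates` helper, the field names, and the sample data are all hypothetical.

```python
from collections import defaultdict


def presence_rates(observations, feature):
    """Return the overall presence rate of a feature and the rate per (device, location)."""
    if not observations:
        return 0.0, {}
    totals = defaultdict(int)
    hits = defaultdict(int)
    for obs in observations:
        key = (obs["device"], obs["location"])
        totals[key] += 1
        if feature in obs["features"]:
            hits[key] += 1
    overall = sum(hits.values()) / sum(totals.values())
    by_segment = {key: hits[key] / totals[key] for key in totals}
    return overall, by_segment


# Illustrative log: the PAA box shows on every mobile check and on no desktop check.
observations = [
    {"device": "mobile", "location": "Berlin", "features": {"paa", "ai_overview"}},
    {"device": "mobile", "location": "Berlin", "features": {"paa"}},
    {"device": "desktop", "location": "Berlin", "features": {"video_carousel"}},
    {"device": "desktop", "location": "Berlin", "features": set()},
]
overall, by_segment = presence_rates(observations, "paa")
print(overall)
print(by_segment)
```

In this made-up log the averaged rate is 50%, yet no segment actually sees the box half the time: mobile users always see it and desktop users never do.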

Tracking SERP Transitions

Instead of obsessing over static rankings or feature counts, teams should monitor transitions and consider how to track SERP features that indicate intent shifts.

Benefits include:

- Early detection of intent shifts: spot changes before they affect rankings.

- Proactive optimization: act on format changes rather than reacting to losses.

- Content type awareness: understand that Google rotates between block types: pages, videos, images, and AI summaries.

Example: If you track that video packs are consistently appearing for a topic, you can preemptively create video content, capturing traffic before competitors respond.

Field Test: Scan your top 5 keywords and note any sudden drop or rise in rich results. This can reveal intent shifts before rankings change.

Using AI to Simulate Real-User SERPs

For small teams, AI offers a way to approximate real SERPs without large-scale infrastructure, making AI-assisted SERP tracking practical even for lean operations. Instead of treating tools as infallible reporting layers, teams can use AI to generate multiple SERP perspectives:

- Edge cases: test device types and intents

- Scenario simulation: generate SERPs under hypothetical conditions (new features, AI summaries, etc.)

- Pattern detection: compare AI-generated SERPs to see which features emerge or dominate

If you use AI as a simulator, you can explore volatility, identify opportunities, and react faster than traditional dashboards allow.


Building a Minimum Viable SERP Tracking System

The ideal system for small teams balances accuracy with practicality:

- Fewer keywords, more context: track patterns instead of every position, focusing on tracking Google search features that actually impact visibility.

- Manual + AI-assisted observation: a small, messy dataset interpreted correctly beats an automated, clean but misleading dataset.

- Focus on transitions: prioritize observing what changes or disappears in the SERP.

Simple workflow:

- Select high-priority queries (10–50 max)

- Record weekly SERP screenshots or AI-generated simulations

- Note emerging features, replacements, and format shifts

- Adjust content and targeting based on transitions

All this reduces wasted effort and surfaces opportunities in volatility while reflecting the chaotic reality of modern search behavior.

Field Test: Look at your top 10 pages in GA4. Are any seeing sudden traffic drops that match a SERP feature change?
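One rough way to run this check is to line up weekly session counts with the weeks in which you logged a SERP change. The sketch below assumes the numbers were copied out of your analytics by hand, and it does not call any GA4 API. The `drops_matching_changes` helper and the 30% threshold are illustrative assumptions.

```python
def drops_matching_changes(weekly_sessions, change_weeks, threshold=0.3):
    """Find weeks where traffic fell sharply around a logged SERP change.

    weekly_sessions: session counts for one page, one per week, oldest first.
    change_weeks: week indexes (0-based) in which a SERP feature change was noted.
    threshold: minimum week-over-week drop, as a fraction (assumed value).
    """
    change_weeks = set(change_weeks)
    matches = []
    for week in range(1, len(weekly_sessions)):
        previous, current = weekly_sessions[week - 1], weekly_sessions[week]
        if previous == 0:
            continue  # no baseline to compare against
        drop = (previous - current) / previous
        # Accept a change logged in the same week or the week before.
        if drop > threshold and ({week, week - 1} & change_weeks):
            matches.append(week)
    return matches


# Illustrative numbers: a new AI block was noted in week 2.
sessions = [1000, 980, 600, 610]
print(drops_matching_changes(sessions, change_weeks={2}))
```

A match is a prompt to look at the SERP, not proof of cause, since seasonality or a tracking change can produce the same pattern.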

Rethinking SERP Tracking for Small Teams

Traditional SERP tracking tools provide value in stability and historical data, but their outputs often fail small teams attempting to capture real-world visibility. Averaged data is misleading, and static snapshots ignore the dynamic and personalized nature of modern search.

Small teams can succeed by:

- Observing transitions rather than mere presence

- Exploiting volatility that tools consider “noise”

- Using AI to recreate realistic SERP perspectives

Small teams gain an advantage by embracing messy, real-time observation. Precision lies in context-aware interpretation.

Focus on dynamic understanding, and even as a lean team, you will be able to navigate the complexity of modern search and capture attention where larger teams rely on outdated assumptions.
